Databases and more Databases….just store and retrieve?

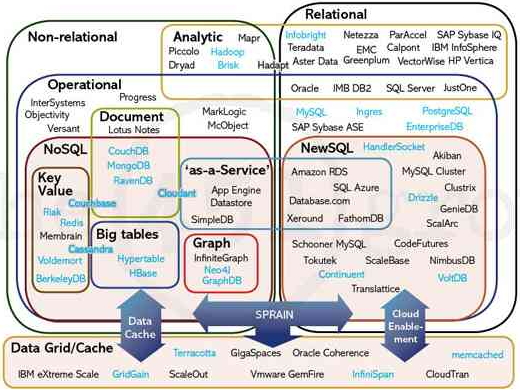

When I think of managing “Big Data”, I frequently think back to the graphic above from The 451 Group. So many nuances associated with the exercise of storing lots of data, accessing the data, and processing it.

The database management systems (DBMS) markets have begun to see some major changes. These changes are being driven by a number of factors such as increased use of clustering, virtualization, cloud computing, a rising tide of open source DBMS usage, new technologies and usage paradigms.

Developments are affecting both relational DBMS (RDBMS) and non-relational DBMS (NDBMS) vendors. The net effect on both markets will be to drive growth – per IDC, the worldwide revenue for non-relational and relational DBMS will grow from nearly $24.6 billion in 2010 to approximately $33.5 billion in 2014 for a compound annual growth rate of 7%. This isn’t the greatest growth rate, but the size of the market is compelling….especially for those database vendors hoping to grab some of the traditional relational DB pie.

With the new generation of web scale companies like Facebook, Google, Linkedin, Twitter, and the like, we find more and more companies using database technologies that weren’t practical in the past, such as in-memory databases, columnar databases, non-schematic (or so-called NoSQL) databases, as well as graph databases.

However, the world of the new….the “non-relational” or “NOSQL” or “NEWSQL” will need to display a few characteristics – most notably:

- Show that they really understand the relational model.

- Show a problem they can solve that relational does not.

- Do the things that a real RDBMS should be able to do, such as: ad hoc queries, views, integrity constraints, security and recovery.

This isn’t just my opinion, but also of people like Chris Date (not only an author of “An Introduction to Database Systems” which is what I studied as part of my Teradata curriculum, but an expert in mathematics and many of the advanced principals in relational theory). And then there’s one other barrier to entry – selling into established and SQL-happy IT groups.

Graph Databases – A Niche?

I’ve been intrigued by the new graph databases recently. So, naturally, I’ve been interested in finding out what these offerings provide.

A graph database is a type of database, which, unlike a relational database, can be deployed with or without advance knowledge of the data structure. This isn’t much different than Hadoop and MapReduce (classified as an analytical database). Unlike most non-schematic databases, which operate on a key-value pair basis, a graph database has a schema at the metastructural level, defining data organization in terms of multifaceted recursive relationships.

Such a database is used for discovering and traversing nodes (or vertices) linked by edges (or associations) to reveal relationships at multiple levels of separation. Such structures are more flexible than any that can be represented relationally (which cannot natively support recursive relationships), and the edges enable much more rapid traversal of relationship structures than is possible through relational joins.

So what’s the big deal?

Traditional relational databases have schemas requiring predefined relationships and are not well designed to discover relationships. Any relationships have to be known beforehand. Relational databases are very good for processing data that is already well defined; they can’t be used to discover the structure of data that hasn’t been previously defined.

Also, traversing data structures in a relational database is inherently less efficient because a relational database requires column value comparisons to resolve joins. Such traversals are achieved, at best, through correlative index lookups and, at worst, through table scans, both of which require many more computer operations than a simple direct navigation through a graph DB.

The benefit of a graph database is that it has the ability to discover the structure of data and can then support complex relationships because the underlying system is capable of a recursive relationship — something that relational databases simply can’t do.

What this means is that an object of a given type can refer directly to another object of the same type, and those connections can be traversed and analyzed, and deeper relationships discovered.

Examples include discovering patterns of associations and interests among “friends” in a social network, identifying commonality among persons of interest in a criminal investigation, discovering patterns of activity among cell phone users, and mining search patterns among online search service users based on their common characteristics, among many others.

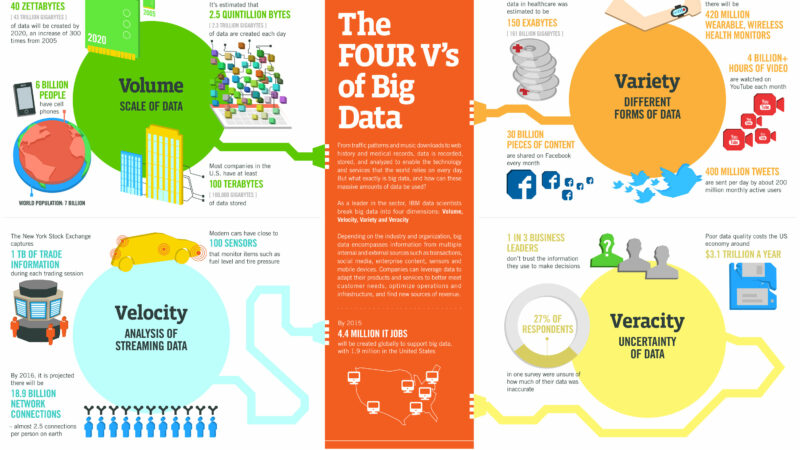

As IDC noted in its 2011 Digital Universe Study, 90% of the data in the digital universe is unstructured, where you don’t know the relationship structure prior to loading the data. Sounds like the graph DB guys might be on to something here.

The underlying structure of a graph database supports this notion of discovering relationships. The actual patterns of data can be imposed on top of that structure so that you can discover relationships. For example, if you’re tracking persons of interest in a criminal investigation, you have lots of data about a person and his or her known associates and all of those people and their known associates and so forth. In a graph database, you can put in all that information and then you can discover if there is a secondary or tertiary association between one person of interest and another, which police could use to determine whether or not a person might have been involved in an incident that they’re investigating.

So I ask again, why not just use something like Hadoop and MapReduce? If your purpose is to discover patterns of relationships and then be able to traverse those in different ways, you can use a simple key-value paired database to load in data.

The graph DB vendors would say that such an approach involves a fairly sophisticated recursive programming, and the result would be an application in which all the logic of relationship traversal is buried in fairly dense code, making maintenance difficult.

Market research efforts aimed at establishing relationships between customers’ buying patterns and product consumption patterns — essentially where you’re dealing with multiple types of things that you need to find associations for — are great applications for graph databases. You might be looking at, for example, patterns of Web page access by different types of customers in order to come up with a profile that you would use to fine-tune how you set up a Web page or what ads you put on a Web page.

2 thoughts on “Databases and more Databases….just store and retrieve?”

Comments are closed.