The Best Use-Cases For Big Graph

The best Big Graph problems will involve the following characteristics:

- Discovery-centric problems (little is known about the data ahead of time): This starts with “data discovery”, which leads to “knowledge discovery” and then to “business discovery”.

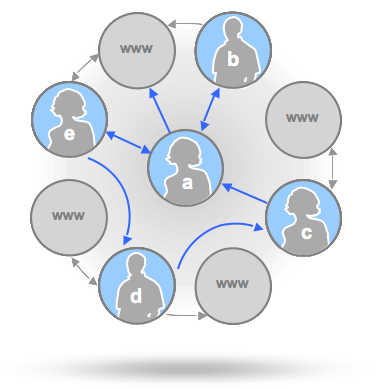

- Data with strong associations (relationships will be key): The data comes from various sources that relate to each other, and that context must be maintained for the data to stay useful to both humans and computers. Once data is linked, a relationship in that data persists from that point forward.

- Involves time-series (events / relationships over time): All customer interactions with a company’s products and services consist of a collection of events. But it’s the sequence of events and the associated patterns that present the opportunity to understand and predict how to maximize the “experience”.

- Real-time data & response required: This focuses on what is happening right now, not on what has already happened, and it enables situational awareness. Real-time data raises the issue of perishable data (data freshness) and “orphaned” data (data that no longer has valid use cases but continues to be used nonetheless; this is different from orphaned data that has lost integrity).

- Heavy web & mobile components: When the industry says that over 50% of purchases are influenced by online interactions/experiences (web or mobile), do I need to say more?

If you are wondering what “Big Graph” is, then read my post on “Big Data and Graph = Big Graph”.

To be honest, I don’t think I’d want to focus on any enterprise problems that didn’t exhibit these characteristics. Frankly, they seem to inherently describe the problems/opportunities with the highest enterprise value.

Here’s a list of a few use-cases to consider…not all of which exhibit all five characteristics above:

- Social network: who’s connected to whom, who you should know, recommendations, influencers, etc.

- Analyze spatial information for telecom call records…clusters, social connections, influencers, etc.

- Location-based services (LBS) analysis of wireless carriers’ customer usage patterns (over time and place)

- Analyze banks’ ACH (Automated Clearing House) information for fraud detection

- Transportation/delivery applications: routing optimization

- Datacenter management/provisioning system

- Network topology analysis/optimization

- Cell tower utilization/optimization

- Genome sequencing

- Surveillance & threats

- Image recognition

- Asset management

- Protein folding
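To make the first use-case a little more concrete, here is a minimal Python sketch of “who you should know” and “influencers” on a toy social graph. It assumes the graph is just a list of (person, person) connections held in memory, and the function names (`build_graph`, `suggest_connections`, `top_influencers`) are hypothetical. Influence is scored as plain connection count (degree), a deliberately naive stand-in; at Big Graph scale this kind of traversal would run in a graph database or graph-processing engine rather than a Python dict.

```python
from collections import Counter, defaultdict

def build_graph(edges):
    """Build an undirected adjacency map from (person, person) pairs."""
    graph = defaultdict(set)
    for a, b in edges:
        graph[a].add(b)
        graph[b].add(a)
    return graph

def suggest_connections(graph, person, top_n=3):
    """'Who you should know': rank friends-of-friends by mutual connections."""
    direct = graph.get(person, set())
    counts = Counter()
    for friend in direct:
        for candidate in graph[friend]:
            # Skip the person themself and anyone they already know.
            if candidate != person and candidate not in direct:
                counts[candidate] += 1
    # Most mutual connections first; ties broken by name for a stable order.
    return sorted(counts.items(), key=lambda kv: (-kv[1], kv[0]))[:top_n]

def top_influencers(graph, top_n=3):
    """Naive influence score: number of direct connections (degree)."""
    return sorted(graph, key=lambda p: (-len(graph[p]), p))[:top_n]

# Hypothetical toy network.
edges = [("ann", "bob"), ("ann", "cat"), ("bob", "dan"),
         ("cat", "dan"), ("bob", "eve")]
graph = build_graph(edges)
```

Here `suggest_connections(graph, "ann")` ranks `dan` first because ann shares two mutual connections with him (bob and cat), and only one with `eve`.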

Have any others? Which one is the “killer app” in your mind?

Experience Mining Wins!

I shouldn’t have to make the case that we have moved into the “experience economy”. As a matter of fact, Joseph Pine and James Gilmore wrote a book on it back in 1999, called “The Experience Economy: Work is Theater & Every Business a Stage”.

The question is: how do you know what your customers, employees, competitors, and vendors are “experiencing”, given how connected they are these days? And if you did know, could you react to that information fast enough?

I’d also argue that few even know WHAT the experiences are, let alone WHY they produce the results they do. Here’s my process for “experience mining”:

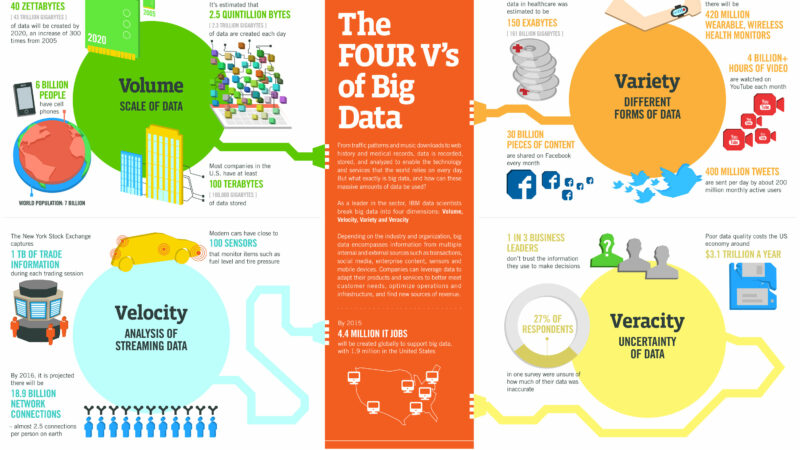

- Determine the “What” by collecting both structured and unstructured data from as many sources as possible. Big Data technologies support this with less of an IT budget impact.

- Analyze the “Why” with new Business Intelligence tools. This is where Big Analytics comes in.

- Perform some “What If” scenarios, using simulation tools that give business users an idea of the impact of a change.

- Forecast “What Will” by applying simple analytics to lots of data. Prediction is the path to realizing value from your knowledge discovery.

- Automate “How” by incorporating response into your systems such that action can be taken without human interaction. Optimize the experiences automatically.

Examples of “What” Experiences Happened?

- How many steps did each of my customers take BEFORE purchasing my product/service?

- How many of them are connected to each other (via their social graph)?

- How similar are they relative to their sequence of steps taken?

- How much time did they spend on each step and/or between each step?
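Most of these “What” questions boil down to ordering each customer’s events by time and walking the sequence. Below is a minimal Python sketch, assuming a hypothetical event log of `(customer, timestamp, step)` tuples in which the purchase step is literally named `"purchase"`. For “how similar” it uses the standard library’s `difflib.SequenceMatcher` ratio, which is just one simple choice among many sequence-similarity measures.

```python
from collections import defaultdict
from difflib import SequenceMatcher

def journeys(events):
    """Group (customer, timestamp, step) events into time-ordered journeys."""
    by_customer = defaultdict(list)
    for customer, ts, step in events:
        by_customer[customer].append((ts, step))
    return {c: sorted(evts) for c, evts in by_customer.items()}

def steps_before_purchase(journey, purchase_step="purchase"):
    """How many steps came before the first purchase (None if no purchase)."""
    for i, (_, step) in enumerate(journey):
        if step == purchase_step:
            return i
    return None

def time_between_steps(journey):
    """Elapsed time between consecutive steps, in the timestamps' own units."""
    return [later[0] - earlier[0] for earlier, later in zip(journey, journey[1:])]

def sequence_similarity(journey_a, journey_b):
    """Similarity in [0, 1] of two journeys' step orderings (difflib ratio)."""
    steps_a = [step for _, step in journey_a]
    steps_b = [step for _, step in journey_b]
    return SequenceMatcher(None, steps_a, steps_b).ratio()

# Hypothetical event log; timestamps could be minutes, hours, etc.
events = [
    ("c1", 0, "search"), ("c1", 5, "product_page"), ("c1", 12, "purchase"),
    ("c2", 3, "search"), ("c2", 4, "product_page"),
]
j = journeys(events)
```

For this log, customer `c1` took two steps before purchasing, with gaps of 5 and 7 time units, while `c2` never purchased.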

Examples of the “Why”?

- Why do most people stop the sequence at step 10?

- Why do the people with the shortest path to a successful purchase exhibit the greatest interaction on social networks?

- Why did almost everyone who started their search at time X end up buying something within one week?

- Why are people who tweet about a product AND shop within one week of that tweet 50% more likely to buy it?
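Before asking “Why”, it helps to pin down what a claim like “50% more likely” means: the segment’s purchase rate divided by everyone else’s, minus one. A minimal Python sketch, assuming hypothetical customer records with `tweet_day`, `shop_day`, and `bought` fields. Note that this only measures the association; explaining why it happens is the analysis that follows.

```python
def tweeted_then_shopped_within_week(customer):
    """Hypothetical segment: tweeted about the product, then shopped within 7 days."""
    tweet_day, shop_day = customer.get("tweet_day"), customer.get("shop_day")
    return (tweet_day is not None and shop_day is not None
            and 0 <= shop_day - tweet_day <= 7)

def purchase_rate(customers):
    """Fraction of customers who bought (None for an empty group)."""
    return sum(c["bought"] for c in customers) / len(customers) if customers else None

def relative_lift(customers, in_segment):
    """Segment purchase rate relative to everyone else (0.5 == 50% more likely)."""
    inside = [c for c in customers if in_segment(c)]
    outside = [c for c in customers if not in_segment(c)]
    rate_in, rate_out = purchase_rate(inside), purchase_rate(outside)
    if rate_in is None or not rate_out:
        return None  # undefined without both groups and some baseline purchases
    return rate_in / rate_out - 1

# Illustrative records only.
customers = [
    {"tweet_day": 0, "shop_day": 2, "bought": True},
    {"tweet_day": 1, "shop_day": 5, "bought": True},
    {"tweet_day": 3, "shop_day": 9, "bought": True},
    {"tweet_day": 0, "shop_day": 6, "bought": False},
    {"tweet_day": None, "shop_day": 4, "bought": True},
    {"tweet_day": 0, "shop_day": 20, "bought": False},
]
lift = relative_lift(customers, tweeted_then_shopped_within_week)
```

With four customers in the segment (three bought) and two outside it (one bought), the rates are 0.75 versus 0.5, so `lift` comes out to 0.5, i.e. 50% more likely.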

Examples of the “What If”?

- What if I were to remove steps 6 & 7 from the experience?

- What if I were to initiate a tweet campaign after step 5? Email campaign?

- What if we incentivized them to reduce time spent on step 10?

- What if we targeted people with the buyers’ profile AND intentionally navigated them through the same purchase sequence?
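A “What If” can start as simply as a funnel model. The Python sketch below assumes each step has a known probability that a user continues past it, and that those drop-offs are independent, so removing steps 6 & 7 just drops their factors from the product. Both the probabilities and the independence assumption are illustrative; a real simulation tool would also model how the remaining steps change once the experience changes.

```python
from math import prod

def completion_rate(continue_probs):
    """Chance a user completes the whole funnel, assuming independent drop-off per step."""
    return prod(continue_probs.values())

def what_if_remove(continue_probs, steps_to_remove):
    """Completion rate if the given steps were taken out of the experience entirely."""
    remaining = {step: p for step, p in continue_probs.items()
                 if step not in steps_to_remove}
    return completion_rate(remaining)

# Illustrative numbers only: 10 steps, most keep 90% of users, steps 6 & 7 keep 50%.
funnel = {step: 0.9 for step in range(1, 11)}
funnel[6] = funnel[7] = 0.5

baseline = completion_rate(funnel)
without_6_and_7 = what_if_remove(funnel, {6, 7})
print(f"baseline {baseline:.3f}, without steps 6 & 7 {without_6_and_7:.3f}")
```

Under these made-up numbers, steps 6 & 7 together keep only a quarter of users, so removing them roughly quadruples completion. That is exactly the kind of sizing a business user would want before committing to the change.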

Examples of “What Will Happen”?

- When we guide users to page X, then page Y, then initiate a tweet discount campaign within one day, then follow up with an email campaign within two days, they will be 90% more likely to buy.
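A “What Will Happen” forecast like this one needs a way to test whether a customer’s journey actually followed the sequence, timing windows included. A minimal Python sketch, assuming journeys are time-ordered `(day, event)` lists and using hypothetical event names (`page_X`, `page_Y`, `tweet_discount`, `email`). It reads “within one day” as one day after the previously matched step. It backtracks rather than greedily taking the first match, because an early visit to page Y can be too far from the tweet while a later visit fits. The forecast is then the purchase rate among historical journeys that match.

```python
def matches_pattern(journey, pattern):
    """True if `journey` contains the pattern's events in order, each within its
    allowed gap (in days) after the previously matched event.

    journey: time-ordered list of (day, event)
    pattern: list of (event, max_gap_days); None means "any time later"
    """
    def search(start, prev_day, k):
        if k == len(pattern):
            return True  # every pattern step has been matched
        event, max_gap = pattern[k]
        for i in range(start, len(journey)):
            day, name = journey[i]
            if prev_day is not None and max_gap is not None and day - prev_day > max_gap:
                break  # journey is time-ordered, so later events are even further away
            if name == event and search(i + 1, day, k + 1):
                return True
        return False  # lets the caller try a later occurrence of the previous step

    return search(0, None, 0)

# Hypothetical encoding of: page X, then page Y, then a tweet discount within
# one day, then an email within two days.
WILL_BUY_PATTERN = [("page_X", None), ("page_Y", None),
                    ("tweet_discount", 1), ("email", 2)]

def pattern_purchase_rate(outcomes, pattern):
    """Purchase rate among historical (journey, bought) pairs that match the pattern."""
    matched = [bought for journey, bought in outcomes if matches_pattern(journey, pattern)]
    return sum(matched) / len(matched) if matched else None

on_time = [(0, "page_X"), (0.5, "page_Y"), (1.2, "tweet_discount"), (2.5, "email")]
too_slow = [(0, "page_X"), (0.5, "page_Y"), (3, "tweet_discount"), (4, "email")]
needs_backtrack = [(0, "page_X"), (1, "page_Y"), (10, "page_Y"),
                   (10.5, "tweet_discount"), (11, "email")]
```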

Examples of “How” to Automate?

Multi-channel campaign marketing (MCCM) platforms become highly important to retailers’ and etailers’ ability to respond automatically to real-time data (assuming you already understand what to look/listen for). Real-time recommendation engines/services are one of many real-time response approaches. Placing “listeners” on your customers’ experience graph allows you to respond to good prospect experiences, whether you are trying to replace bad experiences with good ones, nurture good experiences along faster, or simply create more good experiences.
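Here is a toy version of placing a “listener” on customer experiences, in Python. It simplifies the experience graph down to each customer’s ordered event path, and the class name, rule, and event names are all hypothetical. An MCCM platform or recommendation service would presumably sit behind the `action` callback rather than a list append.

```python
from collections import defaultdict

class ExperienceListener:
    """Toy event hub: records each customer's events in order and fires every
    registered action whose condition matches that customer's history."""

    def __init__(self):
        self.history = defaultdict(list)  # customer -> ordered list of events
        self.rules = []                   # (condition, action) pairs

    def on(self, condition, action):
        """Register a rule: condition(history) -> bool, action(customer, event)."""
        self.rules.append((condition, action))

    def record(self, customer, event):
        """Append an event as it arrives and respond immediately if a rule matches."""
        self.history[customer].append(event)
        for condition, action in self.rules:
            if condition(self.history[customer]):
                action(customer, event)

# Example rule (hypothetical): a customer adds to cart, then leaves the site.
responses = []
hub = ExperienceListener()
hub.on(
    lambda history: history[-2:] == ["add_to_cart", "leave_site"],
    lambda customer, event: responses.append((customer, "send_reminder_email")),
)
for customer, event in [("c1", "search"), ("c1", "add_to_cart"),
                        ("c2", "leave_site"), ("c1", "leave_site")]:
    hub.record(customer, event)
```

The response fires only for `c1`, whose path actually contains the cart-then-leave sequence; `c2` left without adding anything, so no action is taken.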