Customized, Intelligent, Vertical Applications – the future of Big Data?

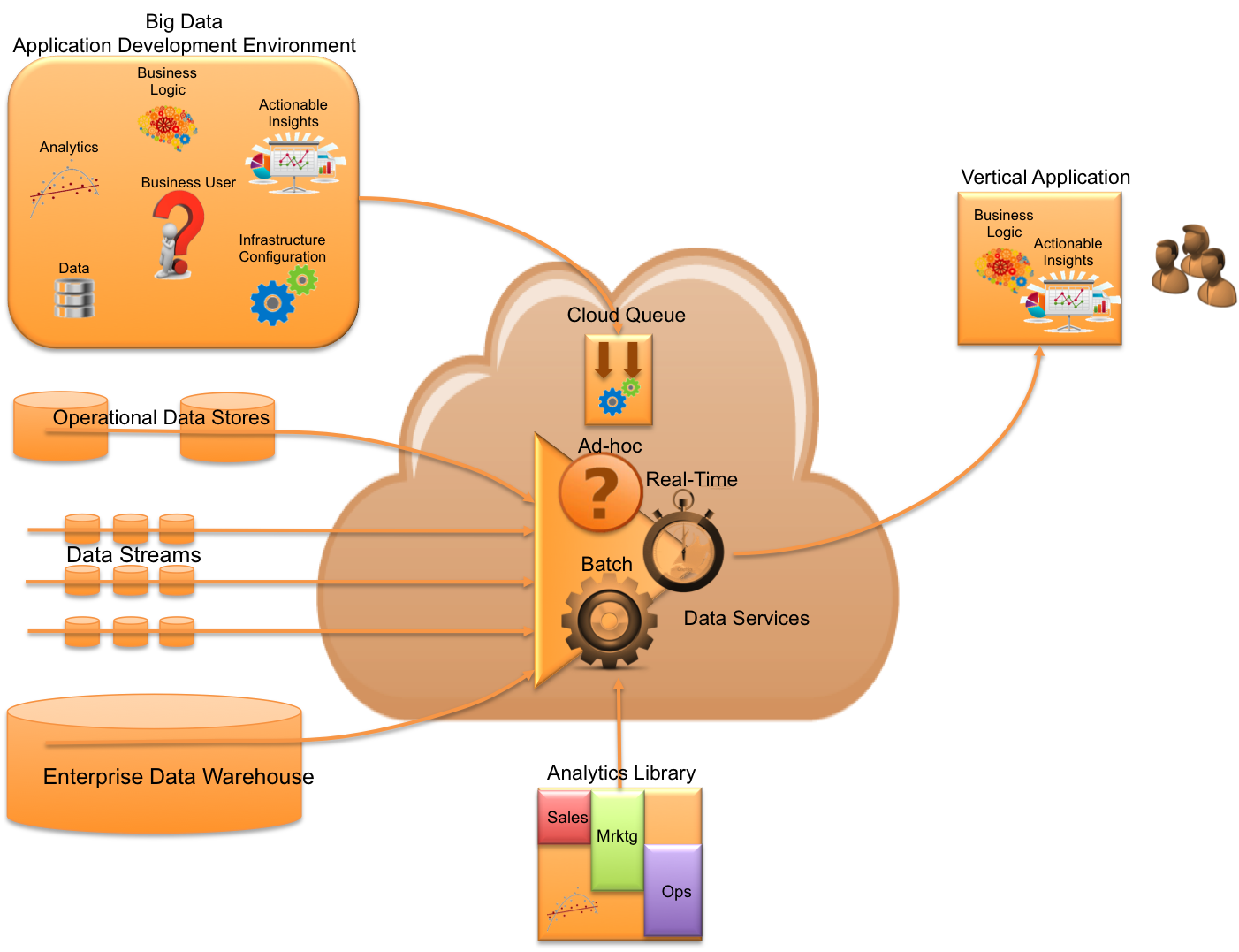

The Ideal Big Data Application Development Environment

Let's assume that your entire organization had access to the following building blocks:

- Data: All sources of data from the enterprise (at rest and in motion)

- Analytics: Queries, Algorithms, Machine Learning Models

- Application Business Logic: Domain specific use-cases / business problems

- Actionable Insights: Knowledge of how to apply analytics against data through the use of application business logic to produce a positive impact on the business

- Infrastructure Configuration: Highly scalable, distributed, enterprise-class infrastructure capable of combining data and analytics with app logic to produce actionable insights

Imagine if your entire organization were empowered to produce data-driven applications tailored specifically to your vertical use-cases.

Data-Driven Vertical Apps

You are a regional bank that is under heavier regulation, focused on risk management, and expanding its mobile offerings. You are seeking ways to get ahead of your competition through the use of Big Data by optimizing financial decisions and yields.

What if there was an easy and automated way to define new data sources, create new algorithms, apply these to gain better insight into your risk position, and ultimately operationalize all this by improving your ability to reject and accept loans?

You are a retailer who is being affected by the economic downturn, demographic shifts, and new competition from online sources. You are seeking ways of leveraging the fact that your customers are empowered by mobile and social by transforming the shopping experience through the use of Big Data.

What if there was an easy and automated way to capture all customer touch points, create new segmentation and customer experience analytics, apply these to create a customized cross-channel solution which integrates online shopping with social media, personalized promotions, and relevant content?

You are a fixed-line operator, wireless network provider, or fixed broadband provider in the middle of a convergence of both services and networks, and you are feeling price pressure on existing services. You are seeking ways to leverage cloud and Big Data to create smarter networks (autonomous and self-analyzing), smarter operations (improving the working efficiency and capacity of day-to-day operations), and ways to leverage subscriber demographic data to create new data products and services for partners.

What if there was an easy and automated way to start by consuming additional data from across the organization, and then deploy segmentation analytics to better target customers and increase average revenue per user (ARPU)?

It Starts With The “Infrastructure Recipe”

OK. You are a member of the application development team. All you have to do is create a data-driven application “deploy package”. It’s your recipe of the data sources, analytics, and application logic to insert into this magical cloud service, which produces your industry- and use-case-specific application. You don’t need to be an analytics expert, a DBA, or an ETL expert, let alone a Big Data technologist. All you need is a clear understanding of your business problem, and you can assemble the parts through a simple-to-use “recipe” that is abstracted from the details of the infrastructure used to execute it.
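
To make the idea of a “deploy package” a little more concrete, here is a minimal sketch of what such a recipe might look like as plain data. Everything in it is an assumption made for illustration: the class names (`DataSource`, `Analytic`, `DeployPackage`), the `batch`/`stream` modes, the location strings, and the algorithm identifier are hypothetical and do not refer to any real product or service.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class DataSource:
    name: str      # the "noun" the rest of the recipe refers to
    location: str  # hypothetical description of where the data lives
    mode: str      # "batch" (data at rest) or "stream" (data in motion)


@dataclass(frozen=True)
class Analytic:
    name: str
    algorithm: str  # hypothetical algorithm identifier
    inputs: tuple   # names of the data sources this analytic reads


@dataclass
class DeployPackage:
    application: str
    sources: list = field(default_factory=list)
    analytics: list = field(default_factory=list)

    def validate(self):
        """Return a list of problems; an empty list means the recipe is consistent."""
        problems = []
        known = {source.name for source in self.sources}
        for source in self.sources:
            if source.mode not in ("batch", "stream"):
                problems.append(f"source {source.name!r}: unknown mode {source.mode!r}")
        for analytic in self.analytics:
            for name in analytic.inputs:
                if name not in known:
                    problems.append(f"analytic {analytic.name!r}: undefined source {name!r}")
        return problems


# A hypothetical recipe for the regional bank example.
recipe = DeployPackage(
    application="loan-risk",
    sources=[
        DataSource("loans", "warehouse table of historical loans", "batch"),
        DataSource("payments", "live feed of payment events", "stream"),
    ],
    analytics=[Analytic("default_risk", "logistic_regression", ("loans", "payments"))],
)
print(recipe.validate())
```

The point of the sketch is that the recipe only names things. Nothing in it says how the data is moved or where the algorithm runs, which is the part the imagined cloud service would take care of.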

Any Data Source

Imagine an environment where your enterprise data is at your fingertips. No heavy ETL tools, no database exports, no Flume or Sqoop jobs feeding Hadoop. Access to data is as simple as defining “nouns” in a sentence. Where your data lives is not a worry. You are equipped with the magic ability to simply define what the data source is and where it lives, and accessing it is automated. You also don’t need to care whether the data is a large historical volume living in a relational database or real-time streaming event data.
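
As a rough illustration of data sources as “nouns”, the sketch below registers two sources under names and then reads both the same way. The `SourceCatalog` class, the source names, and the sample records are all hypothetical; an in-memory CSV string stands in for historical data and a generator stands in for a live event feed.

```python
import csv
import io
from typing import Callable, Dict, Iterable, Iterator

Record = Dict[str, str]


class SourceCatalog:
    """Hypothetical catalog: define a source once, then read it by name."""

    def __init__(self) -> None:
        self._readers: Dict[str, Callable[[], Iterable[Record]]] = {}

    def define(self, name: str, reader: Callable[[], Iterable[Record]]) -> None:
        self._readers[name] = reader

    def read(self, name: str) -> Iterator[Record]:
        if name not in self._readers:
            raise KeyError(f"undefined data source: {name}")
        return iter(self._readers[name]())


# Stand-in for a large historical volume (here just a small CSV string).
HISTORY_CSV = "customer,amount\nalice,120\nbob,75\n"


def read_history() -> Iterable[Record]:
    return csv.DictReader(io.StringIO(HISTORY_CSV))


def read_live() -> Iterable[Record]:
    # Stand-in for real-time events; a real feed would not end.
    yield {"customer": "carol", "amount": "30"}


catalog = SourceCatalog()
catalog.define("purchases_history", read_history)
catalog.define("purchases_live", read_live)


def total(name: str) -> int:
    # The caller uses only the name, not the storage details behind it.
    return sum(int(record["amount"]) for record in catalog.read(name))


print(total("purchases_history"), total("purchases_live"))
```

The calling code never learns whether a name is backed by stored data or by a stream, which is the property the paragraph above is asking for.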

Analytics Made Easy

Imagine a world where you can pick from literally thousands of algorithms and apply them to any of the above data sources in part or in combination. You create one algorithm and can apply it to years of historic data and/or a stream of live real-time data. Also, imagine a world where configuring your data in a format that your algorithms can consume is made seamless. Lastly, your algorithms execute on infrastructure in a parallel, distributed, highly scalable way. Getting excited yet?
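
One way to picture “create one algorithm and apply it to historical or live data” is to write the algorithm against an iterable, so it does not care whether the values come from a stored list or arrive one at a time. The sketch below uses an incremental mean purely as a stand-in for a real analytic; the function names and sample numbers are illustrative assumptions.

```python
from typing import Iterable, Iterator


def running_mean(values: Iterable[float]) -> Iterator[float]:
    """Yield the mean of all values seen so far, updated one value at a time."""
    count = 0
    mean = 0.0
    for value in values:
        count += 1
        mean += (value - mean) / count  # incremental update, no history kept
        yield mean


# Historical data: a finite collection processed in one pass.
historical = [10.0, 20.0, 30.0]
batch_result = list(running_mean(historical))[-1]


def live_events() -> Iterator[float]:
    # Stand-in for a real-time feed; a real stream would be unbounded.
    yield 40.0
    yield 50.0


# Live data: the same function, consumed value by value as events arrive.
stream_results = list(running_mean(live_events()))

print(batch_result, stream_results)
```

Because the update keeps only a count and the current mean, the same code works on years of history and on an endless stream without change. Running it in a parallel, distributed way is a separate concern that this sketch does not attempt.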

Focus on Applications With Actionable Insights

Now let’s embody this combination of analytics and data in a way that can actually be consumed and acted upon. Imagine a world where you can produce your insights and report on them with your BI tool of choice. That’s kind of exciting.

But what’s even more exciting is the ability to deploy your insights operationally through an application that leverages your domain expertise and your understanding of the business logic behind the use-case you are solving. Translation: you can code up a Java, Python, PHP, or Ruby application that is light, simple, and easy to build and maintain. Why? Because the underlying logic normally embedded in ETL tools, separate analytics software tools, MapReduce code, NoSQL queries, and stream processing logic is pushed up into the hands of the application developer. Drooling yet? Wait, it gets better.
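
To show how light such an application could be, here is a small sketch for the regional bank example. It assumes the analytics layer has already produced a risk score for each loan application, so the application itself holds only the business rule. The `LoanApplication` type, the `decide` function, and the threshold values are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LoanApplication:
    applicant: str
    amount: float
    risk_score: float  # assumed to come from the analytics layer, between 0 and 1


def decide(application: LoanApplication,
           max_risk: float = 0.2,
           review_amount: float = 50_000) -> str:
    """Apply the business rule; thresholds are illustrative, not recommendations."""
    if not 0.0 <= application.risk_score <= 1.0:
        raise ValueError("risk_score must be between 0 and 1")
    if application.risk_score > max_risk:
        return "reject"
    if application.amount >= review_amount:
        return "manual_review"  # low risk but large enough to warrant a second look
    return "accept"


for application in (
    LoanApplication("alice", 10_000, 0.05),
    LoanApplication("bob", 80_000, 0.10),
    LoanApplication("carol", 5_000, 0.45),
):
    print(application.applicant, decide(application))
```

All the domain knowledge lives in a few readable lines, while the scoring, data movement, and scaling are assumed to happen elsewhere.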

Big Data, Cloud, and The Enterprise

Let’s take this entire programming paradigm and automate it within an elastic cloud service purpose-built for the organization. You submit your application “deploy packages” and they are processed instantly, without you having to understand the compute infrastructure and, better yet, without you having to understand the underlying data analytic services required to process your various data sources in real-time, near real-time, or batch modes.

OK, if we had such an environment, we’d all be producing a ton of next-generation applications: data-driven, highly intelligent, and specific to our industry and use-cases.

I’m ready…are you?
